As the size of available data grows, massive data sets can no longer be stored in their entirety in the memory of a single machine. Furthermore, because the memory and computation power of a single node are limited, the data and the computation have to be distributed among multiple machines.

However, transferring big data is very expensive; in fact, it is often more expensive than the computation on the data itself. Thus, in the distributed model, the amount of communication plays an important role in the total cost of an algorithm, and the aim is to minimize the communication among processors (CPUs). From the big data perspective, this is one of the main motivations for studying the theory of Communication Complexity.

Communication Complexity (CC) has a rich theory behind it and exhibits a beautiful mathematical structure which can be explored by using various mathematical tools.

In fact, Communication Complexity can be applied to many different problems, from the theory of computation to other related fields, which makes this area a key to our understanding of computation.

CC studies the number of bits that the participants of a communication system need to exchange in order to perform certain tasks. A very simple model for exploring this type of question was proposed by Yao in 1979 [1]. In this model there are two parties, called Alice and Bob, and their goal is to compute some specific function f(x, y), where x is Alice’s input and y is Bob’s input. The results proven in this model can be generalized to more complicated scenarios as well.
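To make the two-party model concrete, here is a minimal sketch of the trivial protocol, in which Alice sends her whole input and Bob replies with the answer. For n-bit inputs and a one-bit output it costs n + 1 bits, which is the baseline any cleverer protocol tries to beat. The names `trivial_protocol` and `equality` are illustrative choices, not taken from [1], and inputs are assumed to be bit strings.

```python
from typing import Callable, Tuple


def trivial_protocol(f: Callable[[str, str], int], x: str, y: str) -> Tuple[int, int]:
    """Run the trivial protocol and return (answer, number of bits exchanged)."""
    bits_sent = 0

    # Alice -> Bob: Alice sends every bit of her input x.
    message = x
    bits_sent += len(message)

    # Bob now knows both inputs and evaluates f locally (local work is free).
    answer = f(message, y)

    # Bob -> Alice: Bob sends back the one-bit answer.
    bits_sent += 1
    return answer, bits_sent


def equality(x: str, y: str) -> int:
    """Example function: 1 if the two bit strings are equal, 0 otherwise."""
    return int(x == y)


answer, cost = trivial_protocol(equality, "1011", "1011")
print(answer, cost)  # 1 5
```

Only the exchanged bits are counted; the computation each party performs on its own is not charged, which is exactly the cost measure described above.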

Although at first glance the field of communication complexity seems mostly related to problems that involve explicit communication, such as distributed computing, its applications are in fact much broader, and in some of them communication does not appear in the problem at all. Examples include the design of Boolean circuits, networks and data structures, in particular proving lower bounds on the cost of these kinds of problems.

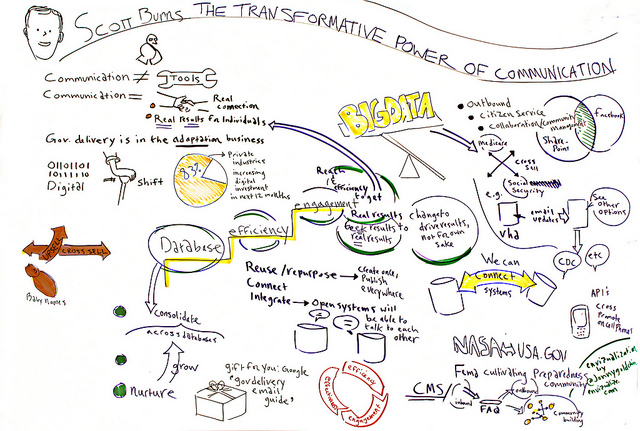

Image licensed in CC 2.0 by Jonny Goldstein

It might seem surprising that CC can be applied to problems in which communication is not involved. Here I discuss a few basic problems in which communication complexity plays a key role:

Distributed Learning via CC:

Let’s consider a framework where the data is distributed among different locations and parties, each holding an arbitrary partition of the overall dataset. Our main goal is to learn a low-error hypothesis with respect to the overall distribution of the data, using as little communication and as few rounds of communication as possible. In other words, in distributed learning we are looking for techniques that achieve communication-efficient learning. Problems such as classification, optimization and differential privacy have been discussed in this setting in recent work [2, 3, 4].

Data Outsourcing and Streaming Interactive Proofs via CC:

When the dataset is fairly large, the data owner cannot retain all of the data, so storage and computation need to be outsourced to a service provider. In such situations, the data owner wants to be assured that the computations performed by the service provider are correct and complete. We can model this scenario as a verification protocol over a data stream, in which there is a resource-limited verifier V and a more powerful prover P. The verifier starts a conversation with the prover, which does the computation and solves the problem. The prover then sends a proof of the validity of its answer in order to convince the verifier to accept the result. The streaming data models the incremental pass that the verifier makes over the data as it sends the data to the cloud. In this setting, the verifier only needs to keep track of a logarithmic amount of information about the data; the price is that the verifier and the prover have to communicate. The goal is to design an interactive proof system [5] with logarithmic communication to verify each query, i.e. after seeing the input and the proof, the verifier should accept the proof of a correct statement with high probability and reject every possible proof presented for a wrong statement. Note that here we consider a more powerful verifier by allowing probabilistic verification. In this way, the problem of verification in cloud computing for massive data streams links to communication complexity theory and Arthur-Merlin games [6]. There has been a series of works on streaming interactive proofs for different problems, which can be found in [7].
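As a rough illustration of a small-memory, probabilistic verifier, the sketch below keeps a single polynomial fingerprint of the stream while uploading it, and later uses it to check data returned by the provider. This is not one of the protocols from [7]: it only checks that the returned multiset matches the uploaded one, and the provider returns the whole data rather than a logarithmic-size proof. The class name `StreamingVerifier`, the modulus `PRIME`, and the assumption that items are non-negative integers smaller than `PRIME` are all choices made for this example.

```python
import random

PRIME = (1 << 61) - 1  # a Mersenne prime, used as the fingerprint modulus


class StreamingVerifier:
    """Keeps O(log PRIME) bits of state about a stream of integers."""

    def __init__(self, rng: random.Random) -> None:
        # Secret random evaluation point, never revealed to the prover.
        self.r = rng.randrange(PRIME)
        self.fingerprint = 1

    def observe(self, item: int) -> None:
        """Process one stream item while it is being sent to the provider."""
        # Fingerprint is the product of (r - item) over the stream, mod PRIME.
        self.fingerprint = self.fingerprint * (self.r - item) % PRIME

    def check(self, returned_items) -> bool:
        """Accept iff the returned multiset has the same fingerprint."""
        fp = 1
        for item in returned_items:
            fp = fp * (self.r - item) % PRIME
        return fp == self.fingerprint


verifier = StreamingVerifier(random.Random(2024))
for item in [5, 17, 17, 42]:
    verifier.observe(item)

print(verifier.check([42, 17, 5, 17]))  # same multiset, different order
print(verifier.check([5, 17, 42, 42]))  # one item was altered
```

A correct answer is always accepted. A wrong multiset of the same size m is accepted only if the secret point happens to be a root of a nonzero polynomial of degree at most m, which has probability at most m / PRIME.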

Data Structure Lower Bound via CC:

Here the key is to discover the link between communication complexity and data structures, and then to use this connection to prove lower bounds for data structures that support certain types of queries. For example, suppose we want to design an efficient data structure for answering queries of the type “is i in S?”. There are two measures for evaluating the quality of an implementation: (1) space, which is the total number of memory cells used; and (2) time, which is the number of memory accesses (reads or writes) needed to answer a query. This data structure problem can be viewed as a communication complexity problem with two parties: one party (Alice) gets a set S as input and the other party (Bob) gets an element i as input. The goal is to check whether i is in S. It can be shown that any implementation of the data structure can be turned into a communication protocol whose complexity is related to the complexity of the data structure. As a result, a bound on the communication complexity implies a time-space trade-off for the corresponding data structure.

A simple scenario that shows this connection is as follows: suppose there is a cell-probe algorithm [8] for a problem that uses a data structure with s memory cells of w bits each and answers a query with t probes. This yields a communication protocol for the same problem with t (w + log s) bits of communication: whenever the processor asks for the contents of a memory cell, the party holding the query sends a message of log s bits giving the index of the desired cell, and the party holding the data structure answers with w bits describing the content of the cell. This exchange is repeated for each of the t probes. A nice study of communication complexity techniques for proving data structure lower bounds can be found in [9].
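The simulation can be sketched in a few lines. The example below assumes, purely for illustration, that the data structure is a sorted array probed by binary search; the names `MemorySide` and `membership_protocol` are hypothetical. Each probe is charged ceil(log2 s) bits for the cell index plus w bits for the cell content, so the total is t (w + log s).

```python
import math
from typing import Iterable, Tuple


class MemorySide:
    """The party holding the data structure: a sorted array with s cells."""

    def __init__(self, elements: Iterable[int], word_bits: int) -> None:
        self.cells = sorted(elements)
        self.word_bits = word_bits  # w: number of bits per memory cell

    def read(self, index: int) -> int:
        return self.cells[index]


def membership_protocol(memory: MemorySide, i: int) -> Tuple[bool, int, int]:
    """Answer "is i in S?" and return (answer, probes t, bits exchanged)."""
    s = len(memory.cells)
    index_bits = max(1, math.ceil(math.log2(max(s, 2))))  # bits to name a cell
    lo, hi = 0, s - 1
    probes = 0
    bits = 0
    while lo <= hi:
        mid = (lo + hi) // 2
        bits += index_bits          # query side sends the cell index
        value = memory.read(mid)
        bits += memory.word_bits    # memory side replies with the cell content
        probes += 1
        if value == i:
            return True, probes, bits
        if value < i:
            lo = mid + 1
        else:
            hi = mid - 1
    return False, probes, bits


memory = MemorySide([3, 8, 15, 23, 42, 57, 64, 91], word_bits=8)
print(membership_protocol(memory, 23))  # (True, 1, 11)
print(membership_protocol(memory, 5))   # (False, 3, 33)
```

Read in the other direction, this is what makes the connection useful for lower bounds: if every protocol for the problem needs many bits, then no data structure can have both small t and small w + log s.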

Property Testing Lower Bound via CC:

Property testing was discussed in a previous [post] as a type of sublinear algorithm. To recap, the goal here is to formalize the question “what can be determined about a large object when we have limited access to it?”. Studies show that there is a strong connection between testing and communication complexity [10]. The biggest similarity is that both involve parties (the tester and the communication players) with unbounded computational power and restricted access to their input.

The authors of [10] consider the case where the large object is a Boolean function f on n input bits and the goal is to decide whether this function has a property P. A variety of techniques and algorithms have been developed for testing Boolean functions, but what distinguishes this work is that it proposes techniques for reducing property testing to communication complexity and uses this connection to prove lower bounds for certain types of testing problems.

The main idea behind the reduction from testing to communication complexity is to set up a communication game as follows: Alice has a function f and Bob has a function g as inputs, and they want to check whether the joint function h, which is some combination of f and g, has a particular property P or is ε-far from all functions that have the property P. In this setting, the link is that the number of queries required to test whether h has the property is related to the number of bits that Alice and Bob need to communicate to do this task.
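A minimal sketch of this link, assuming the joint function is the XOR h(x) = f(x) ⊕ g(x): each query a tester makes to h can be answered by Alice announcing f(x) and Bob announcing g(x), so a tester that makes q queries gives a protocol with 2q bits of communication. The tester used here is the standard Blum-Luby-Rubinfeld linearity test, chosen only as a familiar example; `JointOracle` and `parity_on` are hypothetical names, and the shared `rng` stands in for public randomness that lets both players follow the same tester.

```python
import random
from typing import Callable

BoolFn = Callable[[int], int]  # maps an n-bit integer to 0 or 1


class JointOracle:
    """Answers queries to h = f XOR g and counts the communication used."""

    def __init__(self, f: BoolFn, g: BoolFn) -> None:
        self.f, self.g = f, g
        self.queries = 0
        self.bits = 0

    def __call__(self, x: int) -> int:
        a = self.f(x)   # Alice announces f(x): 1 bit
        b = self.g(x)   # Bob announces g(x): 1 bit
        self.queries += 1
        self.bits += 2
        return a ^ b


def blr_linearity_test(h: BoolFn, n: int, trials: int, rng: random.Random) -> bool:
    """Accept iff h(x) XOR h(y) == h(x XOR y) on every sampled pair."""
    for _ in range(trials):
        x = rng.getrandbits(n)
        y = rng.getrandbits(n)
        if h(x) ^ h(y) != h(x ^ y):
            return False
    return True


def parity_on(mask: int) -> BoolFn:
    """Linear function: parity of the input bits selected by mask."""
    return lambda x: bin(x & mask).count("1") % 2


oracle = JointOracle(parity_on(0b0101), parity_on(0b0011))
accepted = blr_linearity_test(oracle, n=4, trials=20, rng=random.Random(1))
print(accepted, oracle.queries, oracle.bits)  # True 60 120
```

The lower-bound argument runs this in reverse: if deciding the property of h is known to require many bits of communication, then any tester must make at least half that many queries.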

As you can see from the discussion above, in the cases where communication is not explicitly involved, communication complexity is used for proving lower bounds. The communication complexity framework has been well studied, and there are several basic problems that are known to require a large amount of communication. The hardness of these and related problems has been used to obtain lower bounds in many areas, such as streaming algorithms, circuit complexity, data structures, proof complexity and property testing. The basic idea is as follows: for a specific problem that we would like to bound, instead of starting from “scratch” by studying the structure of the problem, we try to find a connection between it and a hard communication problem whose communication complexity is already well known. If we can find such a connection, we can reduce the work involved in proving new bounds, or give simpler proofs of known bounds.

Now maybe the big question is: why do we care about proving lower bounds, and what is important about them?

Observe the main difference between upper bounds and lower bounds [11]: upper bounds show the existence of an efficient solution, while lower bounds must say something about all possible solutions, even those that no one has thought of yet. So it’s not surprising that proving a non-trivial lower bound is significantly harder than obtaining a non-trivial upper bound. The natural goal when proving lower bounds is of course to show that the upper bounds we know for some problem are optimal, i.e. there cannot exist a faster data structure than the one we already have.

Now think of big data: after decades of research, we have arrived at efficient solutions, i.e. upper bounds, for most of the well-known problems in the field. However, since we are dealing with massive data sets, even a small improvement in the performance of a key algorithm or data structure would have a huge impact.

Thus researchers strive to improve the known solutions. But when does it end? Can we always improve the solutions we have? Or is there some limit to how efficiently a data structure problem can be solved? This is exactly the question addressed by lower bounds. Lower bounds are mathematical functions putting a limit on the performance of algorithms and data structures [11].

As a concluding remark, it seems that the theory of communication complexity and techniques for proving lower bounds serve as two important tools for improving our power to design efficient algorithms and data structures for massive data.